Dynamic Programming & Geo-Spatial Analysis

Dynamic Programming (DP) has been the focus of my interview prep for the past two weeks, and I’ll be honest—I really struggled with it. It has been this scary, constantly changing algorithm that I wasn’t really able to pin down or repeat.

The breakthrough came when I realized: it’s not an algorithm, it’s not even code. It’s a design approach. It’s about solving the easier problems first and then approaching the whole as a sum.

The Two Main Approaches

At its core, DP splits problems up into one of two main categories.

1. Top-Down (Memoization)

This is the "lazy" approach. You start with the big problem and break it down recursively.

- How it works: First, check if the sub-problem has already been solved. If yes, use the stored answer. If no, solve it and save the result for later.

2. Bottom-Up (Tabulation)

This is the "eager" approach. You start with the smallest possible piece of the puzzle and iteratively build up to the final answer.

- How it works: Solve the base cases first, filling up a table (or array) until you reach the main solution.

When Should DP Be Used?

First thing I wanted to figure out is when is DP applicable, as trying to use it when it’s not is a huge time sink. The test for DP isn’t straightforward either; it’s very easy to confuse it for simpler approaches (like 2-pointer or sliding window) and vice-versa.

Here’s what I ask myself to try and nail down if DP is the approach:

- Overlapping Subproblems: Does the problem require you to calculate the same thing over and over? If so, DP can save runtime by caching those results.

- Optimal Substructure: This is the most critical part. It means the optimal solution to the main problem can be constructed from the optimal solutions of its sub-problems.

Consider the Assembly Line Scheduling Problem

Imagine two parallel lines of machines running in a factory. Each machine in each line takes a particular amount of time to run, and moving between the lines also takes time. How can you process an item the fastest?

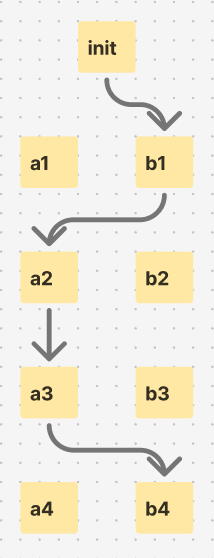

Let’s map out a possible route, ignoring any actual numeric values:

The 4th stage in processing gets reached optimally by starting out with B and then switching to A for 2 steps and back to B for the 4th. Let’s say this same process is actually finished after stage 3, what’s the optimal path then?

It’s pretty clear that it’s the same path: B → A → A.

The quickest path to the 4th layer is the quickest path to the 3rd layer, plus the transition to the 4th. This is a clear display of the sub-structure aspect of DP: the optimal solution is built from each sub-problem.

There is variation within DP. For example, maybe we’re not trying to find the quickest path but rather a specific time value instead. This comes up a lot in DP questions. Finding min-max is easy, but finding "where time = T" paths is much more interesting and normally requires a better understanding of Data Structures & Algorithms. You’d have to add some kind of storage, a cache of path:time values, and reference it as you test for the target value, pruning the search field as you go using DP principles.

🔬 Weekly Niche Breakdown: Geo-Spatial Analysis in Urban Settings

The Problems

- Data Silos: Separation of Urban setting data is common. Most companies tend to gather and use their own data (utility, maps, political), creating fragmentation.

- GPS Limitations: In more dense urban settings (tall buildings), standard GPS doesn’t cut it for traffic routing. Especially in shipping where there are too many unaccounted-for variables (loading zones, construction, events).

- Commercial Viability: Geo-Spatial tech is widely applicable—from non-profits and small businesses to global militaries—everyone could use a better understanding of the world from above. It’s just making it commercially usable that seems to be the problem, especially when GPS is so available and supported.

More niche sub-areas to look into further:

- Isolated Climate Monitoring: Monitoring small areas' heat signatures (think suburbs made with asphalt vs. ones that have good shade). This difference could be a viable way to assess cooling costs, a rising concern as drought and weather become more severe and common.

- Shadow Analysis: Crucial for both solar energy planning and urban agriculture.

Ideas for possible software solutions (regardless of size):

- "Zoning-Checker" API: A small API that aggregates local municipal zoning PDF maps into a searchable API. Real estate investors could query an address and instantly get the zoning classification (commercial, residential, mixed-use) without hiring a surveyor or digging through records themselves.

- Hyper-Local Foot Traffic Analyzer: A tool that uses public mobile data or public transit exit data to visualize foot traffic patterns for small retail business owners. This is common in large-scale retail, but nothing exists for the smaller scale.

How I can tap into this potential (Solo-Dev / Small Team):

- Building on top of OSM: OpenStreetMap (OSM) is powerful. A lot of the work that needs to be done is just bridging gaps between available open source tech and consumers.

- Open Source: Kind of the same sentiment, but contributing to Open-Source projects on top of OSM might be the most impactful way to use this tech right now.